In June 2011, President Barack Obama’s Office of Science and Technology Policy released a white paper called “Materials Genome Initiative for Global Competitiveness” that got the attention of the materials science community.1

Credit: N. Hanacek/NIST

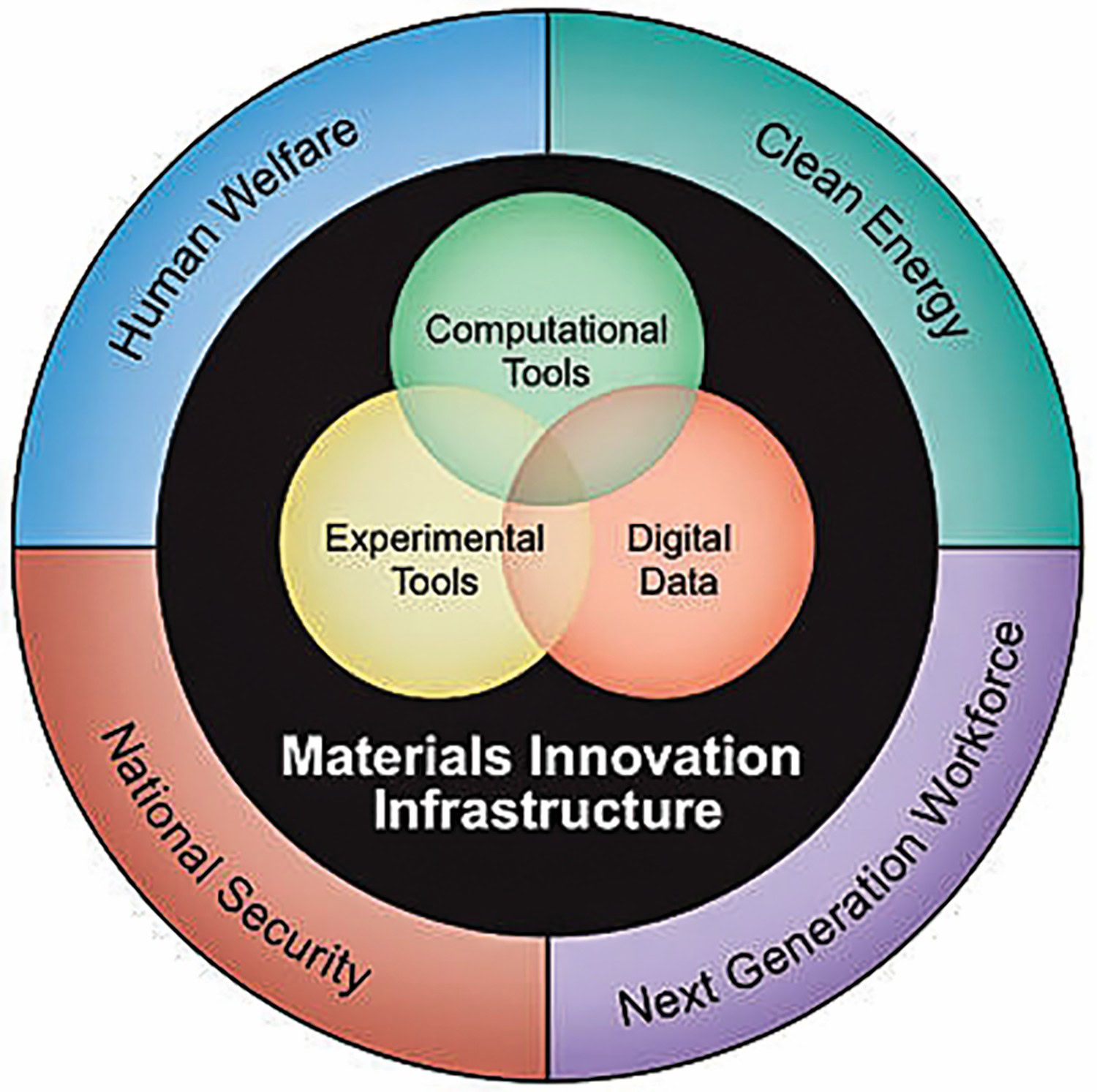

The goal of the MGI was to cut the time from materials development to deployment by 50%, or about 10 years, and to do so at lower cost. The MGI was motivated by a vision to accelerate the pace of new materials development to address urgent national challenges in clean energy, national security, and human welfare. Those developing the MGI concept understood that getting products based on new materials from lab to market more quickly would require building an infrastructure of computational tools, experimental tools, collaborative networks, and digital data.

The white paper was prepared by an ad hoc group of the United States National Science and Technology Council (NSTC) with representation from most federal agencies that fund significant materials research, including several offices each from the Department of Energy, the Department of Defense, the National Science Foundation, and the Department of Commerce. A four-part strategic plan drove the first decade of the MGI:

- Equip the next-generation materials workforce;

- Enable a paradigm shift in materials development;

- Integrate experiments, computation, and theory; and

- Facilitate access to materials data.

NSTC established the Subcommittee on the Materials Genome Initiative, which maintains a website of interagency activities and resources pertaining to the MGI (https://www.mgi.gov). Since 2011, the MGI has grown to include more federal agencies and broader participation from the original agencies. The subcommittee is working on a new strategic plan to guide the MGI into its second decade and to leverage the significant advances of the first.

James Warren

As the MGI stands on the threshold of a new decade, ACerS marks this milestone with an interview with James Warren, director of the NIST Materials Genome Program. Warren was part of the 2010 ad hoc interagency committee that produced the original MGI white paper. Since then, he has tirelessly advocated for the MGI, working with government, academic, and industry stakeholders to build the infrastructure needed to realize the vision set 10 years ago. Warren talks about the genesis of the MGI, the journey of its first 10 years, and what the future holds.

This interview is condensed from a longer conversation, which will be published as an ACerS Ceramic Tech Chat podcast on June 9, 2021. Find it at https://ceramics.org/ceramic-tech-chat.

Q: The Materials Genome Initiative is 10 years old. What drove the idea behind the MGI and how did the materials community react to the white paper?

A: The MGI, when it was rolled out, was a collection of ideas that were not terribly new. In the decades preceding the rollout, a large number of reports had looked at how one could accelerate the design, discovery, and deployment of new materials by tightly integrating modeling with experiment and by managing data better.

These ideas were starting to bear enormous fruit. The early- to mid-2000s started to see reports coming out calling for integrated computational materials engineering. A lot of the database efforts in the computational regime, mostly around density functional theory, were yielding true payoffs. And so the idea for the initiative had been sort of bubbling in the firmament of materials science and related disciplines like chemistry.

When the Obama Administration approached the National Science and Technology Council saying, “Hey, we think something like a materials genome initiative would be a good idea,” there were a lot of people in government who thought, “Yes, we can make that work.”

And, I am laughing now because, of course, the one thing that we did not love was the name!

I think there was a great deal of delight over a major materials initiative coming out of the government. The only other one at that point was really the National Nanotechnology Initiative, which was very substantial. The notion that there would be something that went beyond nano and also emphasized computation was very exciting.

Q: One of the goals of the MGI right from the start was to build an infrastructure that would support its goals. What progress has been made on building the computational tools, experimental tools, collaborative networks, digital databases, and data access that were part of the vision?

A: The MGI is a bit sneaky compared to a lot of these other initiatives because the focus is really on the evolution of this infrastructure. In that sense it is a “meta” initiative. That is, we are trying to build the things that allow us to make the materials. It is a little bit abstract.

A lot of these tools are about managing data, or how you do a computation. It’s not like we want to make the next great battery. We want to make the technologies that allow somebody to make the next great battery.

In terms of specific infrastructure, examples are all over the place. One of the marquee examples is the DOE’s Materials Project. There are many more resources, like the Materials Data Facility and Materials Commons, which NIST and the DOE fund, respectively. These are more generic data-hosting efforts, and they have made a great deal of progress.

There are a lot of efforts at NIST and at other places trying to think about better ways of curating and managing data so that other people can find that data and reuse that data in ways that are more efficient and robust.

How do you merge data sets? How do you gain extra value from that information? There is a tremendous amount of effort going into those questions. You mentioned software tools and computational tools. We fund a lot of sustainable software efforts, which the MGI is happy to build upon for predictive computational materials research.

And then there is also this whole community building activity. And that is almost a whole separate conversation about how we engage. (See sidebar: Materials Research Data Alliance)

Q: You talked about the MGI predating or anticipating some of the big advances in artificial intelligence, machine learning, and deep learning. Do you think those changes were coming anyhow or did the MGI help push them forward?

A: I don’t want to take too much credit! In other words, I think they would have happened anyway. And I think the MGI is a framework for understanding how to accelerate materials discovery, design, deployment, and so on. Essentially, all AI is a system that uses data to develop a model. Well, the MGI is largely about taking advantage of modeling and integrating it with experiment to accelerate materials discovery. So, AI as a paradigm is just another suite of tools that allows us to do that acceleration.

Plus, the MGI is to a large extent about data management, and AI needs data. So the MGI is poised to provide the raw materials for an AI effort, provided the MGI data are made “AI ready.” And AI itself can be integral to an MGI effort. It is that twofold aspect that I think is the overlap. I think the MGI provides an incredibly useful template for articulating what can be done, and it can also be integrated with the broader efforts.

Q: What kind of impact has the MGI had on data-driven discovery of ceramic and glass materials?

A: Can I point to some broad-based answers to that question? Probably not. Can I find superb recent articles that do precisely what you are talking about? Yeah, sure. One of my colleagues, Jason Hattrick-Simpers, and his collaborators have a very nice paper that came out a couple of years ago. They used a combination of high-throughput experiment and machine learning to find, and then to fabricate, a new glassy metal.

That is just one example. The number of people now who are trying to use these techniques is large because it is clear that for materials discovery, anything that can increase your efficiency is something worth exploring. Adding robotics and intelligent systems to help you decide which experiments to do next is where a lot of the action is on this front.

I do not want to sell theory short, because I am a theorist. One of the fun challenges, and where you will see a lot of the intellectual energy going right now, is how you fuse classical theory and predictive models with AI techniques, which are purely data driven. How do you merge those two efforts? There are a lot of smart people thinking about it, but there is no canonical known answer. And whether there will eventually be one, I do not think we know.

Q: Do you think we will ever be able to design a material for an application from first principles?

A: If you are talking to somebody who is trying to make a semiconductor material for nanoscale electronics, we are already doing that. We are already using quantum mechanics in designing materials and in manufacturing.

In those cases, you are effectively using modern technology to build materials atom by atom. And there you can immediately see the connection between some of these tremendously fundamental computations and the material itself. The materials exist at the nanoscale or even smaller. The wires and the vias in microelectronics are now down to three nanometers. The process is just mind-boggling. I can guarantee that semiconductor companies are modeling these things all the way down. In other words, they are using MGI techniques. They have to be, right? At those sizes, the effects are quantum. The leakage issues they are suffering from have got to be all there.

As for structural materials? If I told you that you needed to design a plane wing or build the alloy for a plane wing using molecular beam epitaxy, you would say “I can’t afford that. It is not a good idea.” So instead you take the material, melt it in a bucket, and pour it in a mold. You are trying to make mass quantities and you have to make compromises. This processing technique is going to end up with a mess inside that system, a mess that you probably would rather not have in there. But you are going to have to live with it. [Integrated computational materials engineering] is about managing the costs by being able to predict these internal structures.

Am I ever going to be able to do a first-principles computation of a turbine blade? The answer is no, never. You are going to have to make all sorts of compromises and intermediate calculations.

I’ve dreamt for 30 years that computation would eventually be good at internal pattern recognition and could do its own coarse graining. You could imagine doing a calculation at one level; then it [AI] finds a pattern and does the next-order calculation at the next pattern level up.

If you look at what AI is doing right now, it is kind of like that. It is finding patterns in systems and effectively trying to coarse grain. That is how you can get these predictions out. So I may have to eat my words where I said “never.” It could be that, in my lifetime, we see computations that start with Schrödinger’s equation, and a few other things, and really make macroscale calculations or predictions.

Credit: NIST

Q: We have mostly talked about basic science and research. How do the MGI principles apply to engineering situations? For example, QuantumScape [San Jose, Calif.] recently announced development of new ceramic electrolyte materials for high-density, solid-state lithium-ion batteries. While the company did not reveal its R&D methodology, how could some of the ideas we have discussed have been used?

A: It turns out that the company [QuantumScape] has an explicitly MGI approach. That is, they are doing computation to predict the materials, then down-selecting and doing real experiments on a much-reduced number of potential compounds. And if they are not already, they are going to be using AI. I can guarantee it.

Companies are trying to use these techniques because they can actually make money and make new materials for their designs. A major aerospace company I am aware of is now doing simultaneous design of new materials and the rocket engines that they are building. I think they got the materials development insertion time down to 18 months from what used to be about 30 years. It is completely, unbelievably mind-boggling.

This is the goal of the MGI. We are really trying to make it easier for people, companies, researchers, whomever, to use these ideas and tools. The government is funding this initiative to lower the barrier to entry for these ideas so that more manufacturers can do it with lower resources [initial upfront costs] so they can see the return on the investment quicker. This will help the billion-dollar revenue companies, and it also will allow more players in the field.

So you asked me about engineering impact; that is what this is about. It is already demonstrable.

Q: What are some of the barriers to realizing the MGI’s full potential, including workforce development needs?

A: Workforce development is a big piece of this. You have to have the people that can use the infrastructure to reap the benefits of these developments. To make that happen, there have been a number of efforts, and there are more and more all the time. Another wonderful benefit of the AI revolution is more interest in that field. Because of that, there are programs that are springing up in materials design and the application of AI to materials design at a number of universities.

I think you are going to see materials departments, chemistry departments, lots of different kinds of engineering departments, anywhere there are materials, looking at these things and trying to figure out ways to de-silo AI, which has mostly been taught by electrical engineering or computer science departments. It is just going to become another tool.

Computational work is part of most undergraduate and graduate training, including some undergraduate programs in materials. The same thing is going to be true for the MGI-style design. It would be crazy not to.

Q: What does the future of the MGI look like as it turns the corner on its first 10 years?

A: At least two ideas are at the front of my mind. One is deeper integration with manufacturers. We need to figure out the engagement models and the discussions needed to get them these tools. We must figure out what the barriers to adoption are and what their incentive problems are. It’s complicated, and it’s very company dependent. A big focus of the MGI going forward is getting us all the way out on the TRL [technology readiness level] scale.

Beyond that, I want to see a lot more focus on the integration piece. It has always been at the heart of the MGI, but there are a lot of gaps. Those gaps are now starting to become small enough that we can really start to knit this thing together. And as we see more interoperation of various resources and scales, I think this is going to start to accelerate the MGI.

In the Human Genome Project, there were some very nonlinear moments in how the cost of sequencing changed. It started at nearly a billion dollars for the first one, and now you do your cat for 100 bucks or something like that.

And I would imagine that we are going to see similar kinds of changes, where suddenly something comes along that drives the cost of certain pieces way down, and then you start to attack some other element in the structure. As people start to see the value proposition in these kinds of approaches, it becomes obvious, and we start to see real, disruptive, rapid change in the way things get done. There is no question in my mind that materials science is one of the most lucrative applications of AI, because you are going to make stuff that people want. It is really that simple.

The economic potential is so enormous that I do not think most companies have been able to really grapple with it yet, although you’re starting to see it. The capacity to make things more cheaply and easily, which is what the MGI is about, has got to be at the center.

Q: What role do you see the federal agencies having for the future of MGI?

A: We are trying to be very careful to figure out what the government’s role is. Certainly, the government’s role is not to declare that a particular kind of research is important without understanding what the community thinks is important. All the agencies have missions, so the question is how we fund the research that will meet those missions. We will think about the technologies there and also understand what industry needs so that we are there for them. And if that means understanding AI and how everyone can use it more easily and more intelligently, then that’s where we’ll go.

So then the question might be: When does the government step back? Usually, the answer is when the private sector has stepped in and solved the problem, so it’s no longer a precompetitive situation. That’s great. That’s called winning, right?

In a certain sense, you could say the MGI would be done when everyone says “yeah, that is the way we do things” and “we have all these tools at our disposal.”

Read more: “Materials Research Data Alliance—MaRDA”

Cite this article

E. De Guire, “Materials Genome Initiative 10 years later: An interview with James Warren,” Am. Ceram. Soc. Bull. 2021, 100(5): 24–28.

Article References

1“Materials Genome Initiative for Global Competitiveness,” White House Office of Science and Technology Policy, June 2011. https://www.mgi.gov/sites/default/files/documents/materials_genome_initiative-final.pdf (Accessed April 27, 2021)
